For many e-commerce teams, A/B testing is a standard part of optimization. You test two variants, see which one converts better, and roll out the winner.

In practice, the impact of a test often extends beyond the increase or decrease of a single metric.

What if a test “wins” on conversion, while the average order value drops? Or the number of products per order decreases? Is that really an improvement?

In this blog post, we show why A/B testing only becomes valuable when you look beyond a single metric, and why average order value (AOV) and the number of products per order (UPT, units per transaction) are essential when interpreting test results.

The most common mistake in A/B testing is optimizing based on assumptions. For example: "Making filters more visible will surely increase conversion," or "Fewer choices make purchasing easier." Both sound logical, but visitor behavior is often more complex than you expect. That is why you test.

The second pitfall often follows immediately after: only looking at conversion.

Conversion tells you how many visitors buy, but not how they buy. And that is often where the real impact of a test lies.

To evaluate test results properly, you need to look beyond conversion alone. In practice, these metrics play a crucial role: average order value (AOV), the number of products per order (UPT), and total revenue.

Together, these figures show what actually changes in visitors’ purchasing behavior.
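As a minimal sketch of how conversion, AOV, UPT, and revenue relate, assume you can export the number of visitors per variant plus one (order value, units) pair per completed order. The names `VariantKpis` and `compute_kpis` are hypothetical and not tied to any particular analytics tool.

```python
from dataclasses import dataclass


@dataclass
class VariantKpis:
    conversion_rate: float       # orders / visitors
    aov: float                   # revenue / orders
    upt: float                   # units / orders
    revenue: float               # sum of all order values
    revenue_per_visitor: float   # revenue / visitors


def compute_kpis(visitors: int, orders: list[tuple[float, int]]) -> VariantKpis:
    """Compute the KPIs for one test variant.

    `orders` holds one (order_value, units) pair per completed order.
    This input shape is an assumption made for illustration.
    """
    n_orders = len(orders)
    revenue = sum(value for value, _ in orders)
    units = sum(count for _, count in orders)
    return VariantKpis(
        conversion_rate=n_orders / visitors if visitors else 0.0,
        aov=revenue / n_orders if n_orders else 0.0,
        upt=units / n_orders if n_orders else 0.0,
        revenue=revenue,
        revenue_per_visitor=revenue / visitors if visitors else 0.0,
    )
```

Running `compute_kpis` once per variant puts all figures side by side, so a change in conversion can be read together with the change in order value and basket size.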

In the first A/B test at Studio Anneloes, a builder template without personalization was compared to a variant in which personalization was actively applied. In the test variant, visitors were shown a personalized grid tailored to their previous behavior.

The results were clearly positive. Not only did conversion increase, but the average order value (AOV), the number of products per order (UPT), and total revenue also rose. Visitors completed their purchases more frequently and added more products to their shopping carts.

This A/B test shows that personalization not only influences purchasing behavior, but also directly contributes to higher commercial value.

Analyzing multiple KPIs in relation to each other makes clear how adding personalization can have a measurable impact on the overall result.

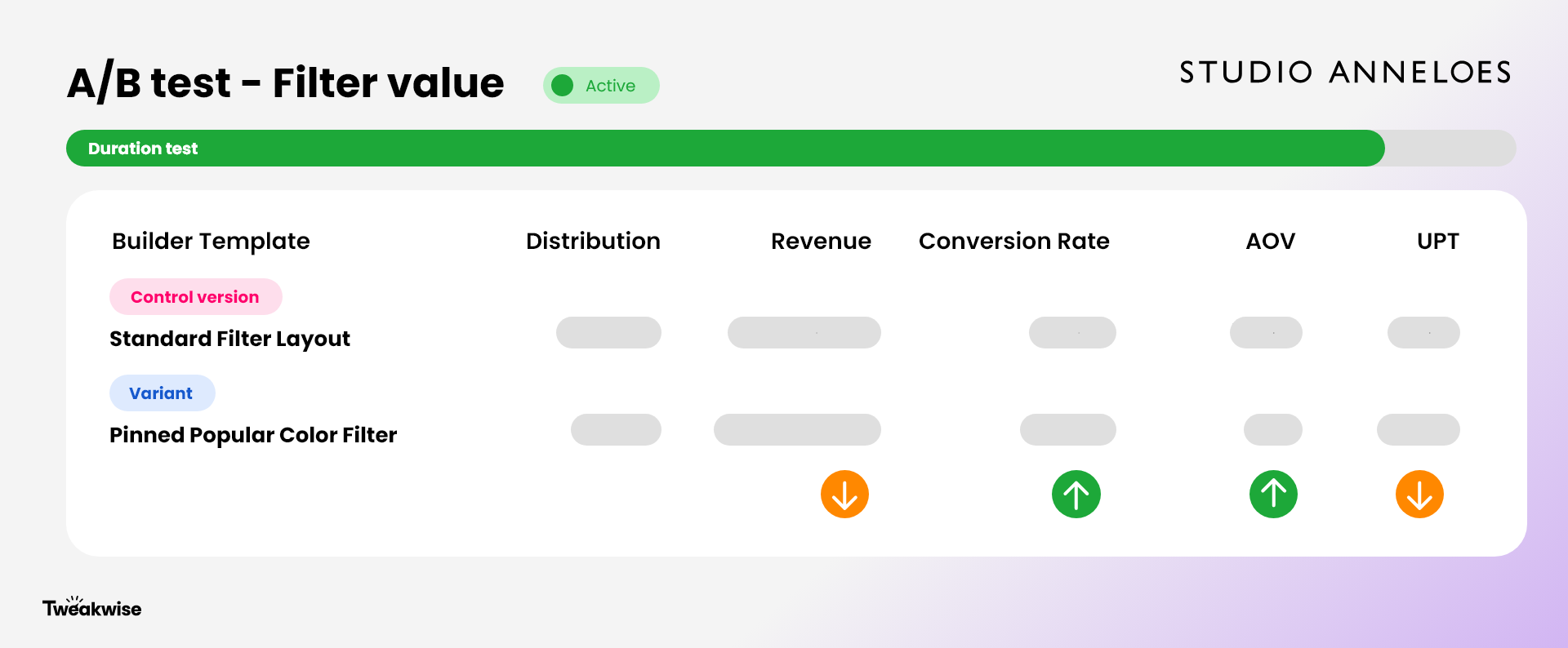

The second A/B test focused on displaying popular filter values in fixed positions within the builder template. For example, a frequently chosen color was made directly visible.

In this A/B test as well, conversion increased. At the same time, it was evident that visitors scrolled less and compared less. This resulted in a lower UPT and ultimately lower revenue.

This A/B test underlines that a higher conversion rate does not automatically mean that a change is better for the overall result. Only when you include multiple KPIs does it become clear whether an A/B test contributes to the desired business objective.
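The arithmetic behind this effect can be sketched as follows. Revenue per visitor equals conversion rate times AOV, and AOV equals UPT times the average price per unit. The numbers below are hypothetical and chosen only for illustration; they are not the results of the test described above.

```python
def revenue_per_visitor(conversion_rate: float, upt: float, avg_unit_price: float) -> float:
    """Revenue per visitor = conversion rate x AOV, with AOV = UPT x average unit price."""
    aov = upt * avg_unit_price
    return conversion_rate * aov


def relative_change(control: float, variant: float) -> float:
    """Relative difference of the variant compared to the control."""
    return (variant - control) / control


# Hypothetical figures: the variant converts better but sells fewer units per order.
control = {"conversion_rate": 0.020, "upt": 2.0, "avg_unit_price": 50.0}
variant = {"conversion_rate": 0.022, "upt": 1.7, "avg_unit_price": 50.0}

rpv_control = revenue_per_visitor(**control)
rpv_variant = revenue_per_visitor(**variant)

conversion_change = relative_change(control["conversion_rate"], variant["conversion_rate"])
rpv_change = relative_change(rpv_control, rpv_variant)

print(f"conversion change: {conversion_change:+.1%}")
print(f"revenue per visitor change: {rpv_change:+.1%}")
```

With these illustrative inputs, conversion rises by 10% while revenue per visitor falls by about 6.5%, because the drop in UPT outweighs the gain in conversion.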

An A/B test is not automatically successful just because conversion increases.

A test only becomes valuable when you understand how it changes purchasing behavior and whether that change serves your business objective.

Sometimes a higher conversion rate with a lower AOV is acceptable, for example with entry-level products or inventory reduction. In other cases, you want to focus specifically on value per order or per customer.

That is why A/B testing is not about winning, but about understanding.

Effective A/B testing is not about winning a test, but about improving the overall result. It is a continuous process of measuring, interpreting, and adjusting based on multiple KPIs.

The cases at Studio Anneloes show that optimizations only become truly valuable when they contribute to revenue, order value, and purchasing behavior in relation to each other.

By looking beyond conversion alone and systematically including AOV, UPT, and revenue, you steer not by isolated metrics, but toward sustainable growth.

That is the difference between testing to win and testing to grow.